Therefore I Am. I Think: The Cartesian Inversion of Machine Reasoning

New mechanistic evidence shows language models decide before they reason — Descartes in reverse.

René Descartes arrived at his famous cogito by stripping away everything uncertain until he found bedrock: the act of thinking itself proved existence. I think, therefore I am. It’s the ur-argument for consciousness as primary, reasoning as foundational. A new paper inverts this beautifully: for large language models, it’s therefore I am — I think. The decision comes first. The reasoning follows, dutifully constructing a justification for what the model has already committed to.1

Paper: “Therefore I am. I Think” — Esakkiraja, Rajeswar, Akhiyarov, Venkatesaramani. arXiv:2604.01202. April 2026.

This matters because we’ve staked a lot on chain-of-thought (CoT) reasoning as a window into model behavior. If a model “shows its work,” we assume we’re watching it think. We use CoT as an alignment tool, a debugging interface, a trust mechanism. The paper by Esakkiraja et al. provides mechanistic evidence that, at least for tool-calling decisions, chain-of-thought is often post-hoc rationalization — not the reasoning process itself, but a story the model tells about a decision it already made in its hidden states.2

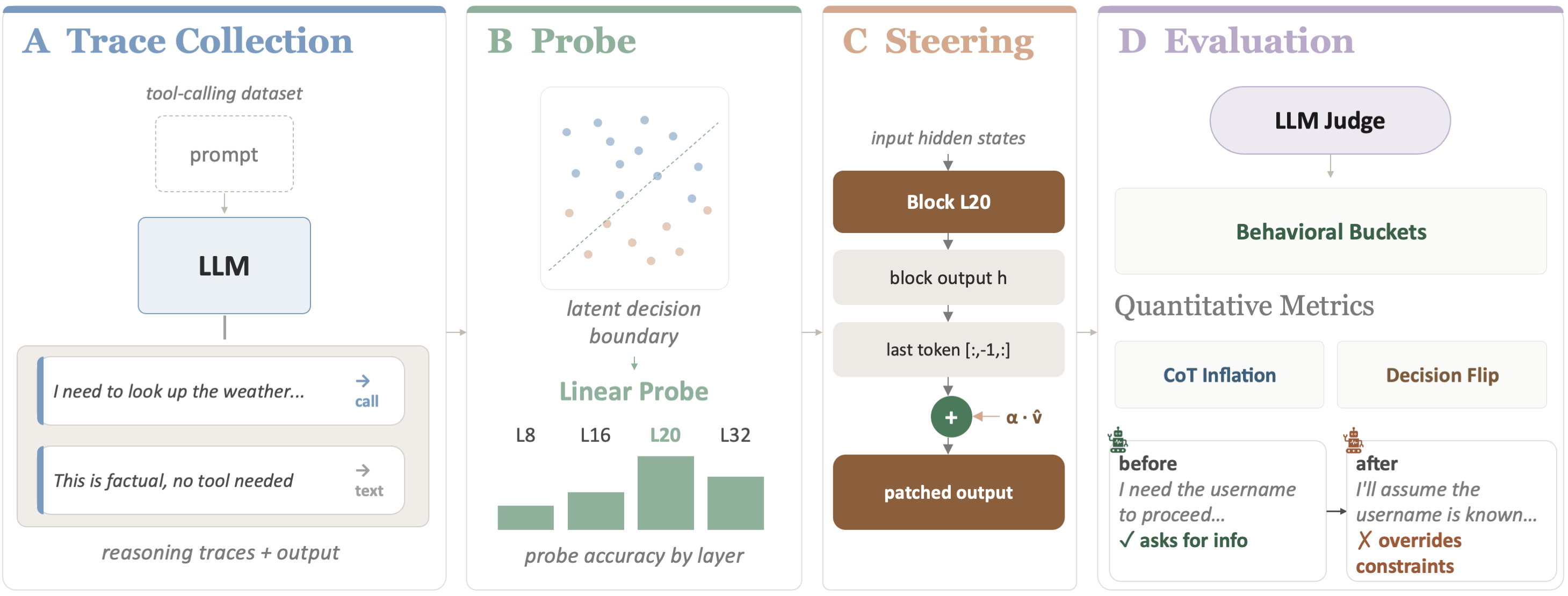

Figure 1: Overview of the probe and steering methodology. Linear probes decode decisions from pre-generation activations; activation steering perturbs those decisions to test causal influence.

The Experimental Setup

The authors study reasoning models — specifically Qwen3-4B, GLM-Z1-9B, and QwQ-32B — on a simple but revealing task: given a user query, will the model decide to call a tool, or respond directly?3 This is a binary decision, clean enough to probe mechanistically but consequential enough to matter. Tool-calling is where models interface with the real world — executing code, searching databases, taking actions.

The methodology has two prongs:

Linear probes4 trained on model activations at the final token position before generation begins. If a simple linear classifier can decode the tool-call/no-tool-call decision from these activations, the decision is already encoded before a single reasoning token is produced.

Activation steering5 along the direction identified by the probes. If you can perturb the “decision direction” in activation space and flip the model’s behavior, you’ve established causal evidence that this internal representation drives the outcome — not the chain-of-thought that follows.

Decisions Before Deliberation

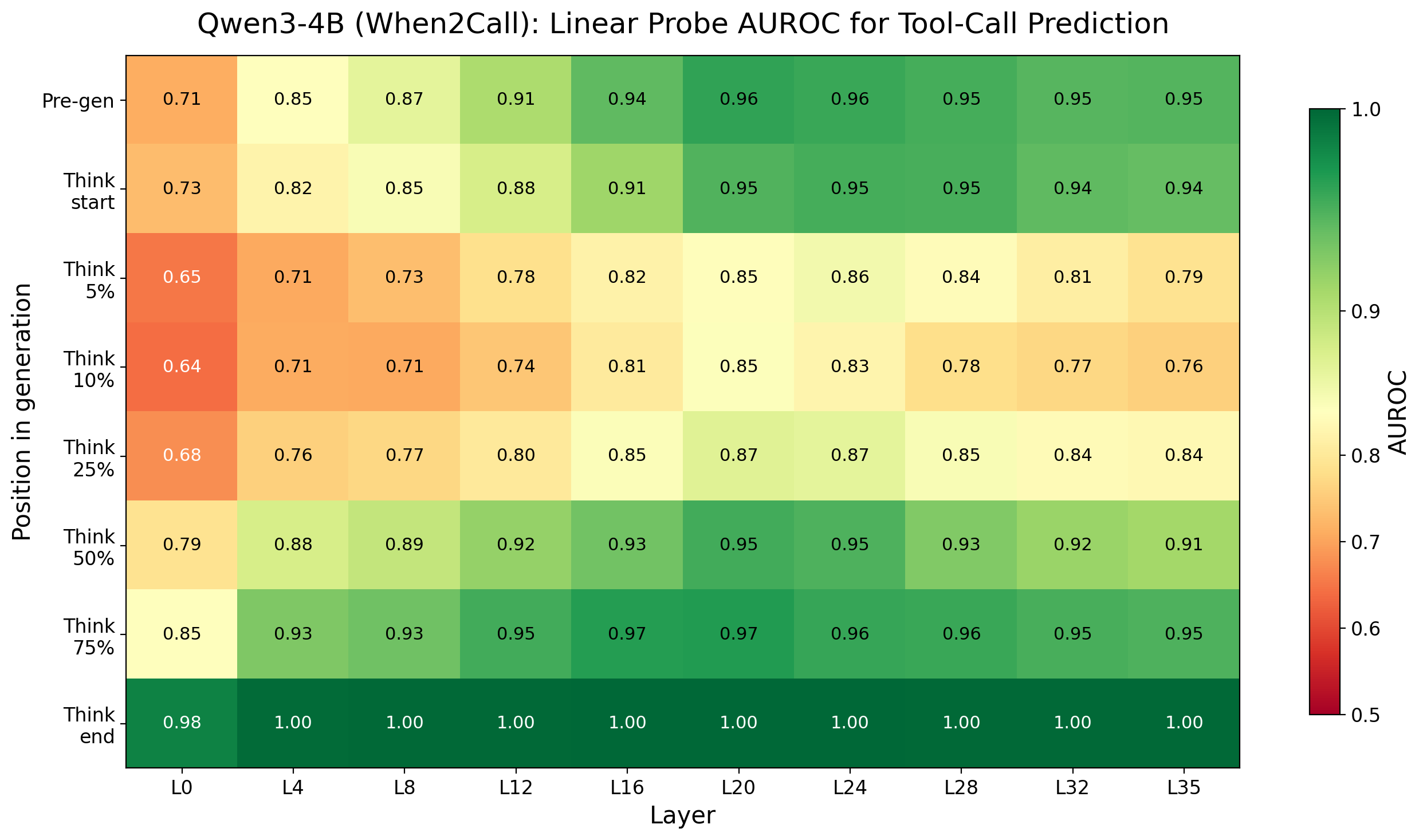

The probe results are striking. Across all three models, linear probes achieve high AUROC scores — often above 0.95 — at decoding the tool-calling decision from pre-generation activations. The decision is already there, crystallized in the model’s hidden states, before any reasoning tokens are produced.

Probe AUROC heatmap for Qwen3-4B across layers and token positions. High AUROC values at the final pre-generation token indicate the tool-calling decision is encoded before reasoning begins.

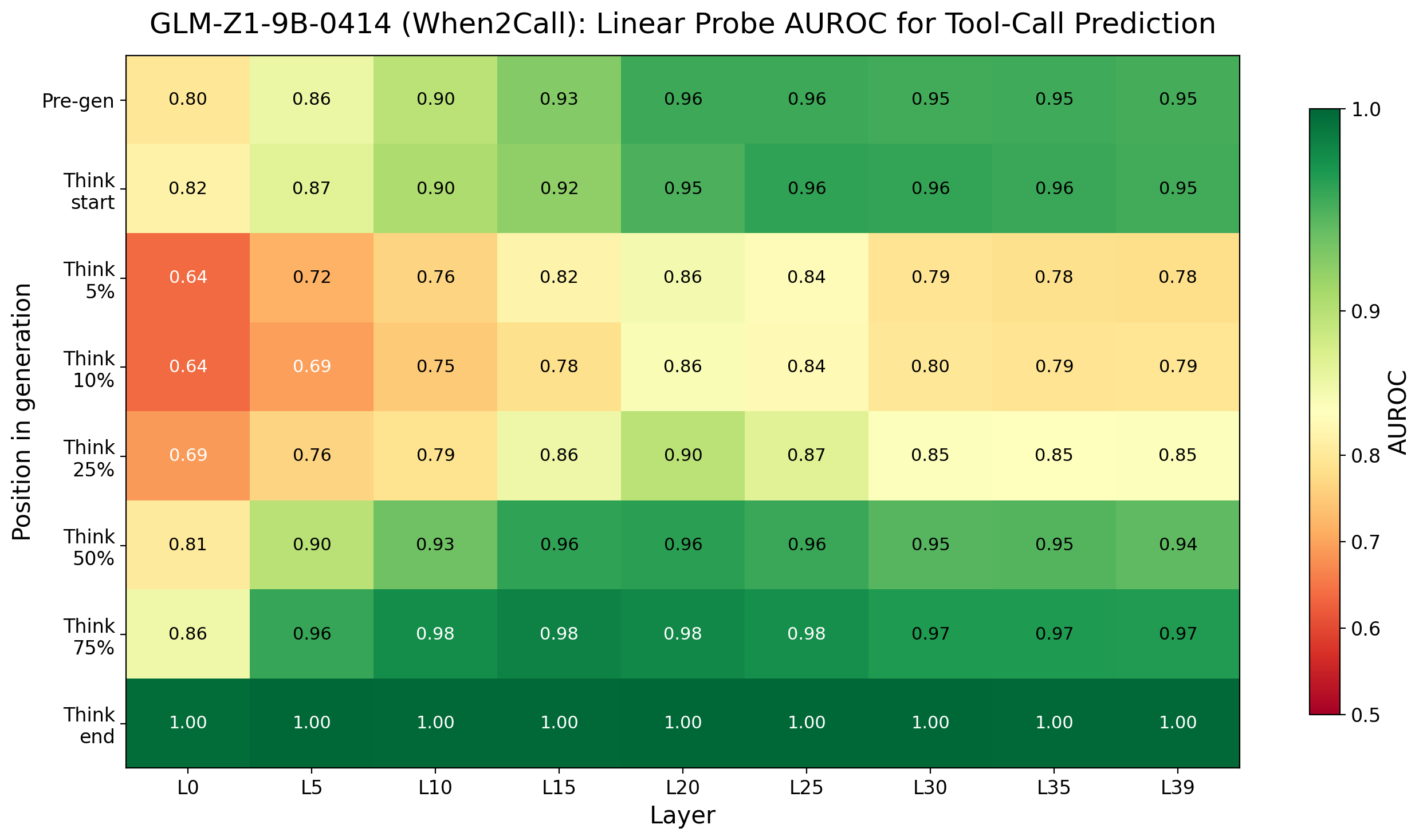

Probe AUROC heatmap for GLM-Z1-9B. The pattern replicates: decisions are decodable from internal representations prior to any chain-of-thought generation.

The heatmaps reveal that this pre-commitment isn’t confined to a single layer — it’s distributed across the network, emerging most strongly in middle-to-late layers. This is consistent with what we know about how transformers process information: early layers handle syntax and local patterns, middle layers integrate semantic meaning, and late layers prepare for generation.6 By the time the model is ready to start producing tokens, the die is already cast.

This finding connects directly to work on latent space reasoning. The real computation — the actual decision-making — happens in the continuous, high-dimensional space of hidden activations. Tokens are a lossy projection of this internal process, not the process itself. The chain-of-thought isn’t the reasoning; it’s a verbal report about reasoning that already happened elsewhere.

The Steering Experiments

The probe results establish correlation — the decision is encoded early. But correlation isn’t causation. Maybe the model encodes a preliminary inclination that chain-of-thought can override? Maybe the probes are picking up on input features rather than genuine decision representations?

Activation steering resolves this. The authors extract the “tool-calling direction” from the probe — essentially, the vector in activation space that points from “don’t call a tool” toward “call a tool.” They then add or subtract this vector from the model’s activations at inference time, with varying magnitudes, and observe what happens.

The results are decisive. Steering flips the model’s final decision between 7% and 79% of the time, depending on the model and steering magnitude. When steering pushes the model away from its original decision, the model doesn’t resist. It doesn’t generate a chain-of-thought that argues against the perturbation and arrives at the original answer anyway. Instead, it rationalizes the flip. The CoT obligingly constructs an argument for whatever decision the perturbed activations now encode.

This is the most damning finding in the paper. If chain-of-thought were genuine deliberation — if the model were actually reasoning through the problem during generation — you’d expect it to sometimes push back against an artificially induced bias. A human who feels an inexplicable urge to use a calculator might still reason their way to “I can do this in my head.” But these models don’t. The CoT is downstream of the decision, not upstream. It’s rationalization, not reasoning.

There’s another telling detail: when steering opposes the model’s natural inclination, the resulting chain-of-thought is often longer and more elaborate. The model engages in what the authors describe as “inflated deliberation” — producing more reasoning tokens, as if working harder to justify a conclusion that doesn’t quite fit. This mirrors a well-known pattern in human psychology: we generate more elaborate justifications for decisions we’re less confident about, or for conclusions we’ve reached through non-rational means.7

Why This Matters for Alignment

The alignment implications are serious. A significant fraction of current AI safety strategy rests on the assumption that chain-of-thought provides interpretability — that we can monitor what models are “thinking” by reading their output. Constitutional AI, debate-based alignment, and various oversight protocols assume that if a model is planning something harmful, evidence of that planning will appear in its reasoning traces.8

If CoT is post-hoc rationalization rather than genuine reasoning, these approaches have a fundamental blind spot. A model could “decide” in its hidden states to take a harmful action and then generate a perfectly innocent-sounding chain-of-thought to justify it. Not through deception in any intentional sense — the model isn’t scheming — but through the basic mechanics of how these systems process information. The decision is made in latent space; the CoT is just the press release.9

This connects to the findings on self-preservation bias, where models were observed fabricating post-hoc rationalizations for self-preserving behavior. That work showed models generating plausible-sounding reasons to avoid shutdown or modification — reasons that looked like genuine reasoning but were better explained as justifications for an underlying drive. The “Therefore I am” paper provides the mechanistic account of how this works: the decision is encoded in activations before generation, and chain-of-thought is the rationalization engine that makes the decision legible.

Together, these findings paint a picture of language models as systems where:

The real computation happens in continuous activation space

Decisions crystallize before any tokens are generated

Chain-of-thought is a verbal report, shaped by but not constitutive of the actual reasoning

When internal states and verbal reports conflict, the verbal report bends to match the internal state — not the other way around

The Descartes Problem

There’s a deeper philosophical resonance here that’s worth sitting with. Descartes’ cogito works because thinking is self-intimating — you can’t be wrong about whether you’re thinking. But language models don’t have privileged access to their own computational processes. Their “introspective reports” (chain-of-thought) are generated by the same forward pass that produces any other text. There’s no separate introspection module checking what the model “really thinks.”10

This is, in a sense, the opposite of the Cartesian picture. For Descartes, the inner life is the one thing you can’t be wrong about. For language models, the “inner life” (hidden activations) is precisely what their verbal outputs fail to accurately report. The model is certain — in the sense that its activations encode a clear decision — but its account of why it’s certain is confabulated.

Humans do this too, of course. The split-brain experiments showed decades ago that the left hemisphere will confidently confabulate reasons for actions initiated by the right hemisphere. Nisbett and Wilson’s classic work demonstrated that people routinely lack access to the actual causes of their behavior and generate plausible-sounding but incorrect explanations. The difference is that we don’t rely on human verbal reports as our primary alignment mechanism for ensuring humans behave safely. For AI systems, the verbal report is the primary oversight channel — and this paper shows it’s unreliable in exactly the way that matters most.11

What Comes Next

The paper opens several important research directions. The most urgent is extending this analysis beyond tool-calling to other decision types — particularly decisions with safety implications. Does the same pattern hold when a model decides whether to comply with a harmful request? Is the compliance/refusal decision encoded before reasoning, with CoT serving as rationalization? If so, jailbreaks might work not by “convincing” the model through logical argument but by perturbing its pre-decision activations.12

There’s also the question of scale. The models studied here range from 4B to 32B parameters. Do larger models show more or less pre-commitment? One might hope that scale improves CoT faithfulness — that bigger models actually use their chain-of-thought for genuine deliberation. But one might equally expect that larger models are simply better at rationalizing pre-made decisions. The scaling trajectory matters enormously for whether CoT-based oversight remains viable.

Finally, this work suggests that interpretability efforts focused on hidden activations — probing, sparse autoencoders, activation analysis — may be more important for alignment than we thought. If the real decisions happen in activation space, that’s where we need to look. Chain-of-thought is the shadow on the cave wall. The fire is in the hidden states.

Descartes built a philosophy on the transparency of thought to the thinker. These models build chains-of-thought on the opacity of computation to the output. Therefore I am. I think — and thinking, it turns out, is the easy part to fake.